Researchers in Rice University’s George R. Brown School of Engineering—a leader in bioengineering, computer science, and statistics—leveraged their combined strengths to secure a new National Science Foundation (NSF) grant for developing principled phylogenetic analysis methods. The grant, Scalable Bayesian Inference with Applications to Phylogenetics, will receive funding in excess of $890,000.

“Phylogenetics uses a graph, frequently a tree-like diagram, as a model to understand how species have evolved; using a ‘tree of life’ diagram is a very old concept dating back hundreds of years. Today that diagram is being continually revised and improved using computational models,” said Huw Ogilvie, the principal investigator for the new Rice grant.

“The way I see it, there are two important reasons to study evolutionary biology through phylogenetics. First, it is important to understand where we come from and what produced our world today. The second reason hinges on the importance of understanding the relationship between species, both broadly and specifically—like how the human genome compares to a mouse genome. That kind of understanding can help us in the field of medicine, where knowing how a mouse responds to a treatment for cancer or other diseases can be used to predict the similarity or dissimilarity of a human’s response to the same treatment.

“Similarly in agriculture, phylogenetics predicts how applicable results from studies of model plants such as Arabidopsis and Brachypodium are to crops such as canola and wheat. Most recently, we have begun to use phylogenetics to understand the relationship between mutations, cellular proliferation and metastases in cancer.”

Despite the importance of phylogenetics to medicine, natural sciences and other fields, available methods are woefully insufficient. As Ogilvie explains, “There are many ways to implement computational models in phylogenetics, but most existing ones simply can’t scale. These methods are restricted because they only work on a limited number of species or genes and not the vast collection of genomes we have today. If applied to whole genomes, such methods might require 1000 years to finish running or take a computer 1000 times more memory than is currently available. We propose a new approach with much greater efficiency, but one that does not sacrifice the rigor of Bayesian inference.”

Eighteenth-century statistician and minister Thomas Bayes developed a theorem to quantify how the credibility of a hypothesis should change when new information comes to light. Commonly called Bayesian inference, this approach to statistical analysis helps scholars across the sciences and humanities conduct their research with greater nuance, accuracy, transparency and rigor.
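As a brief illustration, the theorem can be written in its standard form. The symbols are chosen here for illustration and do not come from the grant itself: H stands for a hypothesis and D for newly observed data.

$$
P(H \mid D) = \frac{P(D \mid H)\, P(H)}{P(D)}
$$

Here P(H) is the prior credibility of the hypothesis, P(D | H) is the likelihood of the data if the hypothesis is true, and P(H | D) is the updated (posterior) credibility. In a phylogenetic setting, H might be, for instance, a candidate evolutionary tree and D a set of sequenced genomes.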

Stanislaw Ulam and John von Neumann invented a general-purpose statistical approach early in the Cold War. Their Monte Carlo method, which estimates probable outcomes through repeated random sampling, is now used in a variety of fields including finance, engineering, and science. Coupling Monte Carlo with a Markov chain (a probability model in which the next state in a sequence depends only on the current state) is so prevalent that statisticians simply call the combination “MCMC.”
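To make the idea concrete, the following is a minimal sketch of one common MCMC variant, a random-walk Metropolis sampler, applied to a toy problem rather than to phylogenetics. It is not the method proposed in the grant. The coin-flip data (7 heads in 10 flips), the uniform prior, and the function names `log_posterior` and `metropolis` are all assumptions made for illustration.

```python
import math
import random


def log_posterior(theta, heads, flips):
    """Unnormalized log posterior of a coin's heads probability (uniform prior assumed)."""
    if not 0.0 < theta < 1.0:
        return float("-inf")  # values outside (0, 1) have zero probability
    return heads * math.log(theta) + (flips - heads) * math.log(1.0 - theta)


def metropolis(heads, flips, n_samples=20000, step=0.1, seed=0):
    """Random-walk Metropolis: each new state depends only on the current one."""
    rng = random.Random(seed)
    theta = 0.5  # arbitrary starting state
    current = log_posterior(theta, heads, flips)
    samples = []
    for _ in range(n_samples):
        # Monte Carlo step: propose a random move near the current state.
        proposal = theta + rng.gauss(0.0, step)
        candidate = log_posterior(proposal, heads, flips)
        # Accept with probability min(1, posterior ratio); otherwise stay put.
        # 1.0 - rng.random() lies in (0, 1], so the logarithm is always defined.
        if math.log(1.0 - rng.random()) < candidate - current:
            theta, current = proposal, candidate
        samples.append(theta)
    return samples


# Hypothetical data: 7 heads observed in 10 flips.
samples = metropolis(heads=7, flips=10)
burn_in = 2000  # discard early samples taken before the chain settles
kept = samples[burn_in:]
posterior_mean = sum(kept) / len(kept)
print(f"estimated posterior mean: {posterior_mean:.3f}")
```

For this toy problem the exact posterior is a Beta(8, 4) distribution with mean 8/12, so the estimate can be checked against a known answer. Phylogenetic models offer no such shortcut, and each step of the chain requires evaluating a far more expensive likelihood, which is where the scaling difficulties arise.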

The MCMC algorithm is already popular and well understood as a general-purpose tool for Bayesian inference, including in phylogenetics, so Ogilvie and his colleagues plan to make dramatic improvements to the efficiency of MCMC to achieve their goal of scalable Bayesian inference. Some of these improvements will likely be applicable beyond phylogenetics, benefiting quantitative research more broadly.

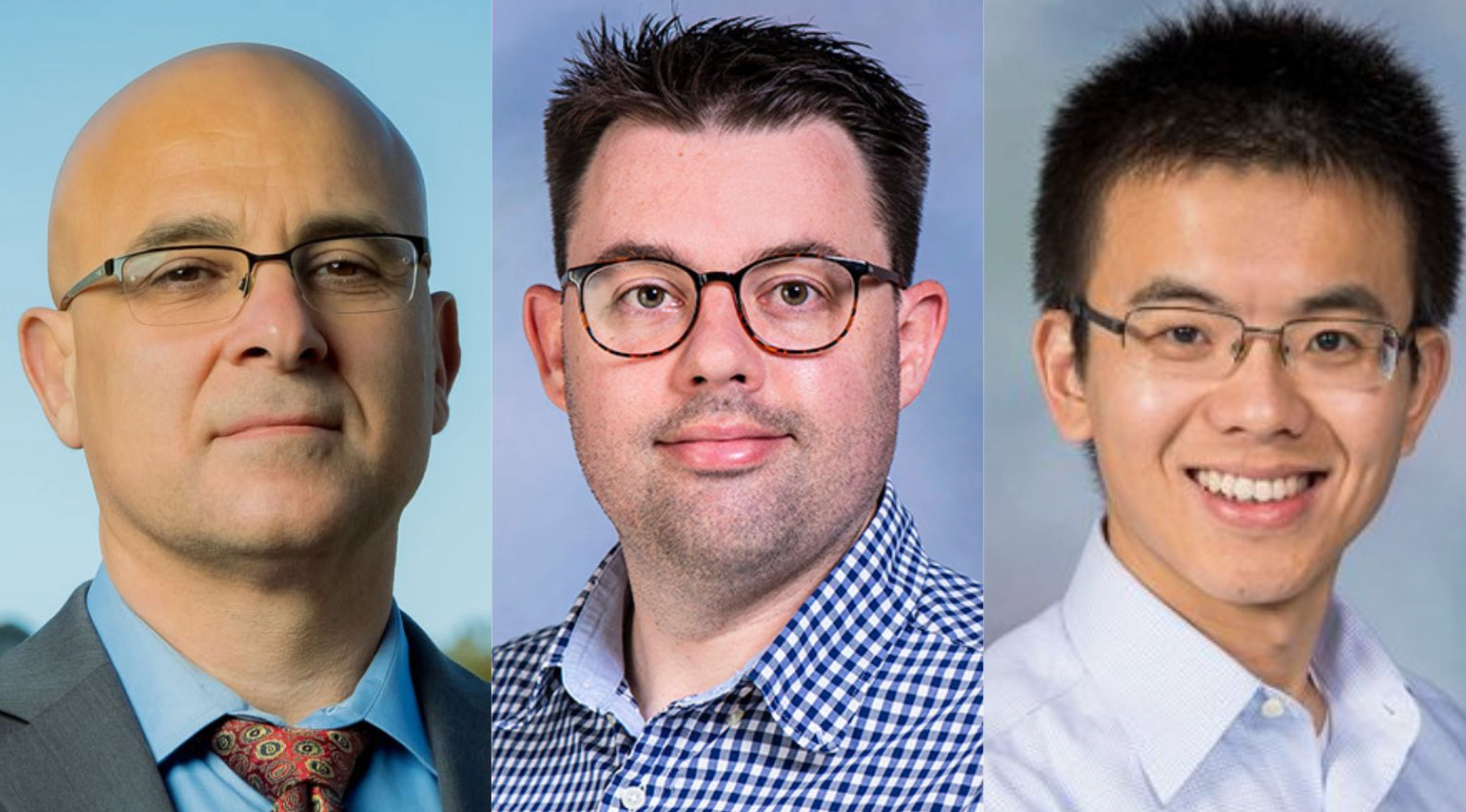

Ogilvie’s passion for developing easily adoptable and principled phylogenetic analysis methods is shared by his Rice colleagues Luay Nakhleh and Meng Li. Nakhleh, the William and Stephanie Sick Dean for Rice’s engineering school, is also a professor of computer science and of biosciences. Li is the Noah Harding Assistant Professor in the Department of Statistics. The professors’ research interests overlap in the area of computational biology.

Nakhleh, whose interest in evolution underpins his research at the intersection of computing and biology, was intrigued by the project because it would enable researchers to conduct analyses at a scale that has not been possible so far.

He said, “Researchers in phylogenomics are big fans of Bayesian inference because of the amount of information it provides based on the data and because they can incorporate into it their prior knowledge in a systematic way. However, with the large amounts of data that biologists are collecting these days, Bayesian inference is falling out of favor among phylogenomics researchers because of its computational limitations. I am very excited that the work we will do in this project would enable Bayesian analyses at much larger scales and improve their applicability in this domain.”

For Li, it was the statistical challenges of the phylogenetic analysis project that piqued his interest. He said, “Rice has a fantastic interdisciplinary environment and the seed for applying Bayesian inference to phylogenetics was planted in my first year here, when I was invited to join the Ph.D. thesis committee for several of Luay’s grad students who focused on phylogenetics. Since then, we have been exchanging ideas about phylogenetics and statistical models, and one recurrent topic converges on how to scale up the implementation with statistical rigor. I’m excited about working with Luay and Huw in this project to tackle the long-standing scalability issue in phylogenomics by developing statistically sound methods.”

Having explored the application of statistics to a range of fields from medicine and neuroscience to materials science over the last five years, Li is keenly aware of the need for new tools and methods that can match an ever-increasing amount of data as well as a growing number of probabilities.

“Complex data are ubiquitous in modern science in the era of big data,” he said. “This project covers a full range of components in Bayesian inference: new priors, approximate methods for computing complicated likelihoods, efficient posterior sampling, and theoretical guarantees. The tools developed in this project, although motivated by phylogenetics, will be broadly applicable to complex data analysis in wide-ranging areas.”

In addition to developing new tools with wide-ranging applications, Rice’s NSF grant also encompasses a high school teacher research program that Ogilvie will coordinate with Rice’s Center for Education.

“When we talk with college students today about the kind of biology they learn in high school, it becomes apparent that there is a gap between their dissecting frogs and studying taxonomy codes and the kind of quantitative analysis taught in many university-level biology courses,” said Ogilvie.

“This grant is funded by the NSF’s division of mathematical sciences and the human genome center in the National Institutes of Health (NIH); both organizations are interested in leveraging the expanding genomic data we are getting, using it in ways that will increase the value of the data and learn more from it. That means our next generation of genomic researchers will need more math, statistics and computer science expertise.

“We may already embrace that trajectory if we’re a professor at a research institution, but it is equally important to transfer some of our knowledge to high school math and biology teachers. If they can then work together to get their students excited about quantitative biology, that would be fantastic.”

Carlyn Chatfield, contributing writer